“The master's tools will never dismantle the master's house.” — Audre Lorde

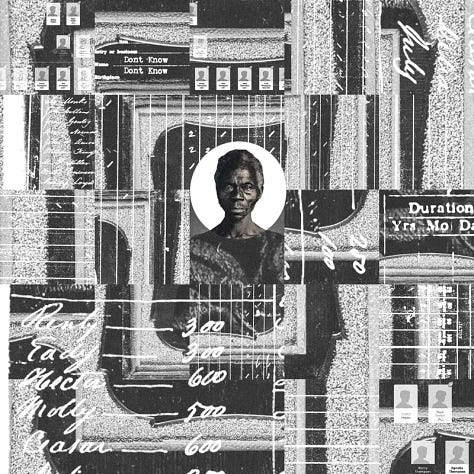

Before they came for us in code, they came for us in chains. Before the lens scanned our faces, it studied our skulls. Before the algorithm flagged us as risk, the overseer named us property.

And so here we are again, not in cotton fields or on auction blocks, but in dashboards and datasets, our lives compressed into metadata, our fears mapped and monetized by technologies draped in the language of neutrality. We were never meant to survive, and yet our survival has always been too loud, too bright, too Black for the systems built to forget us.

It is a curious thing, this country’s obsession with progress. The same nation that dismembered Black life to build its wealth now baptizes its machines with promises of equity and efficiency. But when you look closely, when you truly look, you see that the same old gods have been uploaded into new temples. The plantation is not gone; it has simply upgraded its software.

The algorithm, like the lash, does not ask you your name. It does not care for your dreams. It scans your skin and assigns a probability. It surveils your neighborhood and predicts a crime. It silences your protest and sells your rhythm back to you through an influencer with less melanin and more followers. This is not innovation—it is a remix of control.

But we, the children of escape routes and coded songs, know how to speak in frequencies they cannot track. We’ve always known. We were making technology long before Silicon Valley was mapped, grinding freedom into spirituals, encrypting joy into juke joints, building communication networks through quilts, churches, and front porches. We are not new to this surveillance. And we are not waiting on liberation to be programmed into a new app.

What follows seeks to trace the ways in which digital tools, from facial recognition to predictive policing, do not simply reflect anti-Blackness but reproduce it. It calls upon the lens of racial capitalism, as laid bare by Cedric Robinson, to reveal that these technologies are not broken; they are doing exactly what they were designed to do.

And yet, always yet, it also spotlights the Black technologists, artists, and freedom dreamers who are not merely resisting these systems, but building beyond them. Because survival alone is not enough. We have more than endured. We are remaking the code.

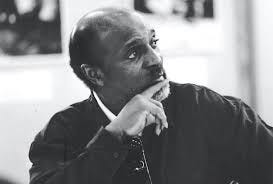

Cedric Robinson told us the truth plain: capitalism did not emerge in spite of racism, but through it. It was not colorblind, not some neutral engine of industry corrupted later by bias. No, from the very beginning, capitalism was racial. Its foundation was not merely extraction, but the sorting of human worth by phenotype, by lineage, by proximity to power.

And what was true in the age of empires and auction blocks remains true in the age of algorithms.

The mistake we often make, especially in this country, where forgetting is policy, is believing that new tools mean new values. We dress up the machine in modern language: efficiency, innovation, objectivity. But machines are not born clean; they inherit. And, like all inheritance in this nation, they are steeped in the blood of the unequal.

The modern algorithm, that god of so many industries, is not some neutral instrument floating above history. It is a scion of empire. It was built by hands that never saw us as fully human. It was trained on data that remembers our deaths more than our lives. It was optimized for profit, not for justice.

Look closely and you’ll see: today’s predictive policing is yesterday’s slave patrol reborn in code. Risk assessments in the courtroom are simply new ledgers in the old language of control. Even the conveniences of the consumer world, those slick apps that recommend what we might buy, watch, and listen to, are built by scraping the same Black creativity this country once beat, jailed, or stole. TikTok is a ring shout with its bones pulled out. Spotify is a cotton gin for culture.

And so we arrive at what Robinson might call the digital plantation. A vast network of surveillance, extraction, and erasure that feeds on Black life while pretending not to see it. Here, the overseer does not ride a horse, he rides a signal. The fields are not cotton, they are servers. The labor is not waged, it is watched. And the lash is not leather, it is the click, the scan, the algorithmic score that trails us like a ghost we never asked for.

What Robinson gave us was a lens, a method of seeing that tears the veil from these structures. He taught us that to speak of technology without history is to speak in fantasy. That the architecture of our world has always been rigged to serve empire, and that resistance begins by naming what the empire dares not speak: its own design.

And so this section of the journey is a study in inheritance. A tracing of how the master’s house has been digitized. How the logic of domination has learned to wear the mask of math. How, unless we choose otherwise, the plantation will keep evolving, but never disappear. Because the truth is this: the algorithm is not broken. It is working exactly as intended.

You do not need bars to build a prison.

Sometimes, all it takes is a pattern of numbers, a grainy scan of a face, a set of coordinates on a block long condemned. Sometimes, the jailer wears no uniform, he wears a badge made of code.

The American carceral state has always been a master of reinvention. From plantation to penitentiary, from chain gang to stop-and-frisk, from the sound of the whip to the sound of the siren, its genius lies in adaptation. Now it has found its most seamless disguise: the algorithm.

Consider the camera that watches not to see, but to predict. The lens that scans your skin, not to know you, but to assess your threat. Facial recognition software, hailed as neutral and efficient, has misidentified Black faces with an error rate that borders on farce. Robert Williams, a Black father in Detroit, was arrested in front of his children because the software said he looked like someone else. It was wrong, and it didn’t matter.

This is not coincidence, it is consequence. The datasets that train these tools are soaked in bias. They are fed a history where Blackness is already criminal, already suspect, already deviant. The machine does not see a person, it sees a probability. And that probability is drawn from archives of punishment, not protection. But facial recognition is only the entry point.

There are algorithms now that tell police where to patrol before a crime has occurred: predictive policing, they call it. A science fiction fantasy made real, where past arrests become the blueprint for future surveillance. But when the past is distorted, when it reflects decades of racial profiling, then prediction becomes self-fulfilling prophecy. The algorithm doesn’t predict crime; it predicts contact with the police. It patrols where Blackness lives.
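The loop described above can be made concrete with a toy model. What follows is a minimal sketch, not any vendor's actual system: the function names, the two neighborhoods, and every number are invented for illustration. It assumes only that patrols are sent in proportion to past recorded arrests, and that arrests are recorded only where patrols are present.

```python
def simulate_patrols(recorded_arrests, offense_rate, patrols_per_round, rounds):
    """Toy model: patrols follow past arrest records; new arrests follow patrols.

    All inputs are hypothetical. `offense_rate` is the number of arrests
    recorded per patrol sent to a neighborhood.
    """
    counts = dict(recorded_arrests)
    for _ in range(rounds):
        total = sum(counts.values())
        updated = {}
        for name, past in counts.items():
            # "Prediction": send patrols in proportion to past recorded arrests.
            patrols = patrols_per_round * past / total
            # Police only record what they are present to see.
            updated[name] = past + patrols * offense_rate[name]
        counts = updated
    return counts


def share(counts, name):
    """Fraction of all recorded arrests attributed to one neighborhood."""
    return counts[name] / sum(counts.values())


# Two invented neighborhoods with the SAME underlying offense rate,
# but an uneven starting record left behind by past over-policing.
history = {"north": 60.0, "south": 40.0}
rates = {"north": 0.5, "south": 0.5}

after = simulate_patrols(history, rates, patrols_per_round=100, rounds=10)
print(round(share(after, "north"), 3))
```

In this setup the two neighborhoods behave identically, yet the record never drifts toward equality: the over-policed neighborhood keeps the share of arrests that history handed it, and the absolute gap between the two widens every round. The data measures where the police went, not where harm occurred.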

Inside courtrooms, risk assessment tools determine who sits in jail and who goes free. They claim to be impartial. But what they assess is not character, it is history. And Black folks, historically, have been handed harsher sentences, denied bail, over-policed, under-protected. The machine absorbs all of this. And when asked who to trust, it tells the judge: Not them.

Even in schools, children are being assigned “threat scores” based on behavior, attendance, zip code. Imagine a system where a ten-year-old Black boy who missed breakfast, whose mother works nights, is deemed high-risk before he even speaks. The school calls it intervention, but we know it as early sentencing.

The algorithm, then, is not innovation. It is memory: the state’s memory of whom it has chosen to punish. It is the plantation reborn, not with chains, but with checkboxes and scores, digital phantoms that haunt Black life at every turn. And still we are told these tools are progress.

But what is progress, if it perfects the harm? What is progress, if it automates the very violence we have spent generations trying to survive? This is not the future—it is a faster version of the past. And we are expected to be grateful for the speed.

To be Black in this world is to be watched. Not with the wonder of curiosity, but with the precision of suspicion. Not with the intimacy of care, but with the hunger of a state that does not see a life, but a liability.

In the digital age, this watching has gone deeper, it has gone colder. The gaze has become code. And in that coldness, Blackness is not merely visible—it is flagged.

Black creators know this already. They dance and the platform shadows them. They organize and the feed buries them. They speak of justice, and the algorithm hears threat. It is not accidental, it is design. What used to be the classroom suspension, the stop-and-frisk, the “random” search, has now become content moderation. Censorship dressed in sleek interfaces, branded as safety.

We are told these systems are colorblind. But they are trained on the culture of the dominant. They misread African American Vernacular English as aggression. They label resistance as incitement. They detect joy in brown skin as noise, not signal. A Black woman laughing too loud becomes a risk. A drag queen performing becomes inappropriate. A protest becomes hate speech, but only when we are the ones posting.

Meanwhile, others profit off the very thing we are punished for. White influencers mimic Black slang and rhythm for clout and sponsorships. AI tools generate digital Blackface to sell their brand of diversity. Black bodies remain the blueprint, but never the beneficiary. It is the minstrel show uploaded into the cloud.

And so we are left with this: our presence online is a double-edged sword. We are visible but not seen; loud but not heard; used but not credited. We exist inside a platform that consumes us, then silences us for speaking too loudly about the way we are consumed.

What emerges is a kind of Digital Jim Crow, a system that separates and stratifies without signs, without water fountains, without laws to point to. Just ghost codes, silent removals, and closed doors in the form of closed visibility metrics.

This is not just about hurt feelings, it is about power. Because in a world where attention is currency, to be erased is to be made poor. In a world where surveillance is policy, to be hypervisible is to be at risk. The Black user becomes a paradox: desired but unwelcome, necessary but unprotected. And yet, we remain.

We bend the tools. We create new dialects the algorithm can’t follow. We build shadow communities and coded language, and we use the very platforms that erase us to birth new ways of being. We go live, we glitch, we ghost, we remix.

Because we have always known how to hide maps inside music, how to build freedom in plain sight. Still, let us be clear: this is not liberation—it is adaptation, and adaptation is not justice. At least, not yet.

Empire never dies; it mutates. It puts down the sword and picks up the sensor. It trades the crown for a logo, the colony for a user base, the overseer for a server rack. But the violence, the extraction, the hierarchy, the hunger, remains.

What we now call Big Tech was never just about innovation. It has always been about empire. A quieter, more elegant one. One that does not conquer through boots on the ground but through terms of service. One that claims to connect while it catalogues, that mines not gold but attention, not bodies but data. And still, the bodies remain.

Behind every sleek device, there is a chain of suffering so carefully hidden it becomes invisible. Black and brown hands mining cobalt in the Congo so our batteries can hum. Children pulling lithium from the earth, their fingers coated in blood and dust. Content moderators in the Global South absorbing the worst of the internet to keep it “safe” for first-world consumers. Gig workers tracked like cattle. Every “smart” device rests on a dumb, brutal truth: someone somewhere had to be exploited to make it work.

This is not an accident of capitalism, it is its blueprint. As Cedric Robinson taught us, racial capitalism requires a hierarchy of human worth. And in this system, Blackness, whether in Harlem or Haiti, in Oakland or Accra, is never the beneficiary of the future—it is the fuel.

Meanwhile, the same tech giants that promote rainbow logos in June bankroll tools of war and surveillance. Microsoft supports predictive policing. Amazon has pitched its facial recognition to ICE and sold it to police departments. Palantir feeds data into carceral systems with the precision of a scalpel. Google works with the Pentagon. The pipeline between Silicon Valley and state violence is not a bug; it is a partnership.

When uprisings happen, when our people flood the streets to mourn and to rage, the algorithm watches. It scrapes our faces, collects our chants, maps our movements, and then it hands all of it to the state. They call it public safety. We know it as counterinsurgency.

Even the platforms that claim to be ours, where we gather, grieve, and organize, become tools of surveillance. A livestream becomes evidence, a hashtag becomes a dossier, a tweet becomes a case file. And still, we are asked to trust the system. To believe that diversity in tech will solve what was built on domination. To believe that hiring a few Black faces will rewrite the machine’s purpose. But what is the use of a Black face on the plantation if the plantation still stands? We do not want a seat at the table if the table feeds on our flesh. We want a new table or no table at all.

This is the role of empire in the digital age: not to control you with chains, but to seduce you with convenience. To track you, mine you, feed off your joy, erase your pain, and then call it innovation and sell it back to you at $9.99 a month. But we have learned to read the fine print. We have learned that behind every algorithm is a question of power. And the real question, perhaps the only question, is: who does this tool serve? Because if it does not serve the people, it serves the empire. And the empire has never served us.

We have always known how to live inside the machine without becoming it. We’ve built sanctuaries out of scraps, harmonies from hunger, freedom from frequencies too holy for the empire to catch. If the algorithm is the new overseer, then resistance is our jailbreak, and we are brilliant at escape.

To speak of Black resistance in the digital age is not to fantasize about utopia. It is to witness the ordinary miracle of what we build despite—despite the erasure, despite the code, despite the ever-evolving plantation humming behind the screen.

Take Joy Buolamwini, the Ghanaian-American computer scientist who stared into the machine and saw it could not recognize her face. So she built something that could. The Algorithmic Justice League, born from that refusal, became not just a watchdog, but a visionary force—insisting that code is not neutral and that bias is not benign.

Or Mutale Nkonde, who asked not only what the algorithm sees, but why it sees the way it does. Through initiatives like AI for the People, she repositions policy not as red tape but as defense, armoring Black life with data dignity and digital rights.

There is Data for Black Lives, a collective of scientists and technologists refusing to let numbers become nooses. They turn metrics into medicine. Spreadsheets into abolitionist tools. They understand that to wield data is not enough—you must liberate it from the logic of harm.

And still, there are artists—those who break the machine not with code, but with beauty. Sondra Perry glitching Black bodies until surveillance shudders. Rashaad Newsome queering AI into ballroom, into choreography, into refusal. American Artist, whose very name is protest, creating installations where Blackness refuses to be indexed, refuses to be made legible on imperial terms.

These are not tech reforms. They are spiritual technologies, acts of reprogramming that say: we are not yours to predict, not yours to flatten, not data points on your map to profit from. Even the smallest gestures are acts of genius: a burner account for safety, a group chat for organizing, a new vernacular for slipping past the algorithm’s eye. Every time a Black kid builds a private server to find kin, every time a protester scrambles their metadata, every time we make a meme that cuts sharper than policy, joy becomes a firewall and community becomes encryption. Because Blackness in digital space is not just content; it is a signal, a strategy, a spell. And we are casting it daily.

We are not just resisting surveillance. We are building parallel architectures of care. We are not waiting for the future. We are writing it—line by line, beat by beat, glitch by glitch.

The master’s tools will not dismantle the master’s house, but perhaps they can be melted down, reformed in fire, reshaped in love. Because survival is not the end goal. And resistance, while necessary, is not the whole of our story. We were not born merely to outlive oppression. We were born to reimagine the world. So the question becomes: What does a technology look like when it is designed not to manage, predict, or punish, but to heal? What happens when we build with intimacy, not efficiency, as the north star?

The tech industry would have us believe that representation is the revolution. Hire more Black engineers, diversify the pipeline, make the interface more “inclusive.” But integration without transformation is assimilation. And Black faces on violent systems do not make those systems holy. They simply diversify the harm.

What we need is not better representation within extractive systems. What we need is new systems entirely—rooted in community governance, radical transparency, and consent.

Imagine:

A platform where users own their data—where memory is sacred, not monetized.

An internet governed like a neighborhood council, not a Fortune 500 boardroom.

Technology that listens like an elder and responds like a cousin—slow, deliberate, accountable.

In fact, we already have blueprints. In the speculative fiction of Octavia Butler, who warned us and dreamed us forward. In the abolitionist design work of Maya Indira Ganesh and Ruha Benjamin, who remind us that what we call innovation often mirrors the old chains with sleeker metal. In the healing rituals of Black trans technologists, who do not separate code from care, security from softness. This is not fantasy. It is already happening, on the margins, in the whispers, in the code shared late at night between people who’ve known what it means to be unprotected and still chose to protect each other anyway.

The future must be built not in the image of empire, but in the image of us. Black, queer, disabled, undocumented, poor: not as afterthoughts, not as filters, but as the architects. Because when we build, we build sanctuaries, not surveillance. And if the master’s tools cannot serve us, we will craft new ones, with love encoded in every line. Because freedom is not a glitch, it is the original dream.

We are living in the afterlife of too many empires. And still, we build. We build because ruin is not the end. It is the beginning that dares to be honest, where the mask falls, where the wires are exposed, where the lie of neutrality breaks apart like brittle glass and we are left not with illusion—but with truth. And in that clearing, we do what we have always done. We gather, we patch, we conjure.

The digital landscape may have been seeded in the logics of extraction, but the soil is shifting. And Black hands, tender, trembling, steady, are planting something new. We are building not for scale but for soul; not for speed but for safety; not for optimization but for belonging. We are building technology that remembers. Not just our preferences, but our people. We are building algorithms that forget what they were taught and instead learn to listen. We are building spaces that move at the pace of healing, not at the pace of profit. And we are doing so together.

Because the only firewall that has ever truly worked is community. The strongest encryption is love in motion. And liberation will not be streamed, but it will be coded: in rhythm, in refusal, in care.

To those who build: build like we matter. To those who code: code with the memory of the ancestors at your back. To those who imagine: imagine past the edge of what this system can hold. Because the master’s house is burning, the tools are failing, the algorithm is trembling under its own weight. And here we are, in the smoke and ash, holding blueprints. We are the architects now, so let’s build like it.

Bibliography & Further Reading

Books & Articles:

Cedric J. Robinson, Black Marxism: The Making of the Black Radical Tradition

Ruha Benjamin, Race After Technology: Abolitionist Tools for the New Jim Code

Safiya Umoja Noble, Algorithms of Oppression

Simone Browne, Dark Matters: On the Surveillance of Blackness

Virginia Eubanks, Automating Inequality

Shoshana Zuboff, The Age of Surveillance Capitalism (with critical lens)

Reports & Projects:

Joy Buolamwini & Timnit Gebru, “Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification” (MIT Media Lab)

Data for Black Lives – https://www.d4bl.org

AI for the People – https://www.aiforthepeople.org

Algorithmic Justice League – https://www.ajl.org

Documentaries & Visual Media:

Coded Bias (dir. Shalini Kantayya, Netflix)

The Great Hack (dirs. Karim Amer and Jehane Noujaim, Netflix)

Un(re)solved (PBS Frontline – re: surveillance of Black activists)

Black Artists & Thinkers Featured:

Joy Buolamwini – Computer Scientist & Poet of Code

Mutale Nkonde – AI policy expert, founder of AI for the People

Sondra Perry – Digital performance & installation artist

Rashaad Newsome – Artist blending machine learning with Black cultural performance

American Artist – Conceptual artist interrogating Blackness and systems